Coded Bias

(USA, 84 min.)

Dir. Shalini Kantayya

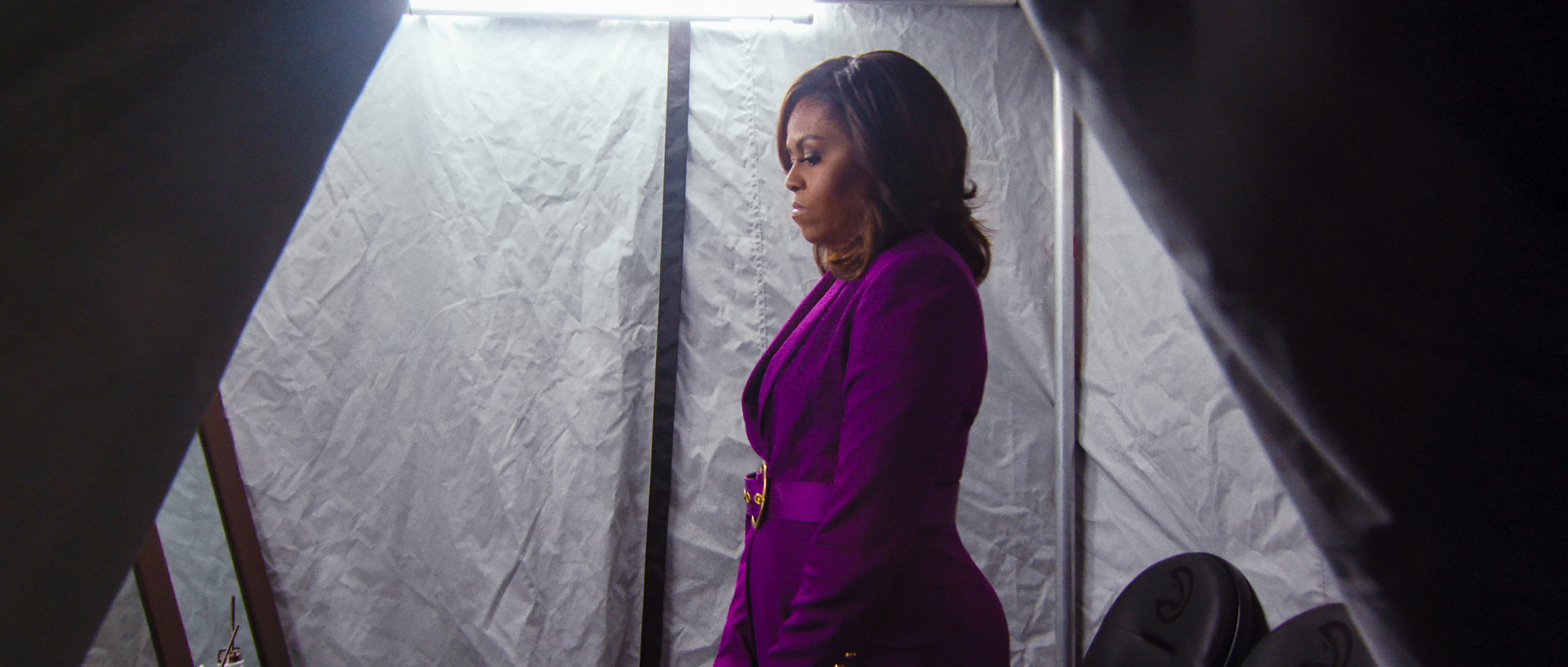

When George Orwell penned the idea of Big Brother in 1984, he never indicated whether the figure was (at some point) a character or merely a symbol. Either way, one could fairly assume that Big Brother is a white guy. Shalini Kantayya’s eye-opening documentary Coded Bias examines the inherent discriminatory practices in surveillance technology and algorithms. Orwell’s concept of Big Brother and the panopticon is now reality, with all-seeing eyes tracking citizens’ moves worldwide. The doc investigates how the data sets, coding, and algorithms that guide these systems of mass surveillance are overwhelmingly created by white men and fail to recognize distinctions of race and gender, as well as the subjective variables such factors introduce. Coded Bias is a revealing study of Big Brother’s pervasive reach, which extends far further than Orwell could have imagined.

The key research that fuels Coded Bias comes in the story of Joy Buolamwini. A PhD candidate at the MIT Media Lab, she recalls struggling to realize an idea for a simulation that required facial recognition software. Buolamwini, who is Black, couldn’t be detected by the technology until she covered her face with a white mask. For people like her who are “rich in melanin,” as the higher-ups of the big tech companies say, machines don’t recognize their existence. Buolamwini’s research uncovers how the data sets on which much facial recognition software coding is based draw from photos of white people, mostly men. This flaw reflects unconscious bias, as the white guys writing the code simply create a pool of data based on people who look like them.

The implications of this gap reach far beyond a simple social media filter like the one Buolamwini was trying to create. Coded Bias observes the discriminatory nature of this bias in action when Kantayya’s cameras go to London, England, which is gaining notoriety as one of the most heavily monitored cities in western society. The images are jarringly chilling. Coded Bias witnesses a group of activists on the street, informing passersby that a van parked up the road is employing facial recognition software. One man, not wanting to have his face documented, shields himself from the camera. Officers swarm him and issue a fine. A teenage boy is misidentified as a criminal and is subjected to unnecessary interrogation and detention. With images that shock and startle, Kantayya shows firsthand how facial recognition software is often faulty and inaccurate.

Unconscious bias in algorithms further widens socio-economic disparities. These algorithms are what subject Cathy O’Neil calls “weapons of math destruction.” They’re predictive formulas used, at least in western society, to achieve targeted marketing clusters. This is the sort of thing where postal codes and online browsing habits might lead one person to receive ads for usurious moneylenders while others receive ads for premium toilet paper. Coded Bias looks at how algorithms inevitably prey on the needy, from shopping profiles to probationary outcomes. One interviewee explains how the terms of her parole tightened after she got a job and received commendations from two public officials. A decorated teacher tells how he nearly lost his job following an automated review. These algorithms, based on pre-set variables, remove the element of human subjectivity from the decision-making process.

Coded Bias presents many concerning examples. Appropriately, though, Kantayya lets humans be the voice of reason. Drawing upon first-hand experiences of discrimination and undue hardship, Coded Bias makes a compelling case that the luxuries of automated technology don’t outweigh its side effects. Many tech-savvy docs have tackled the extreme privacy concerns raised by the internet and social media, but Coded Bias is one of the stronger ones. Kantayya’s film, while overtly about technology, offers a broader essay on gatekeeping and decision-making. While advocating for greater regulation of facial recognition software, Coded Bias also wins the argument for more inclusive practices across all levels of hiring and governance.

Coded Bias is now streaming at Hot Docs Ted Rogers Cinema.